How NLP Handles Medical Jargon in Multiple Languages

Medical NLP systems face unique challenges when processing jargon across languages. Here's why it's tricky and how solutions are evolving:

- Medical Jargon Complexity: Terms like "paracetamol" vs. "acetaminophen" vary by region. Abbreviations like "PCR" differ by context. Models must distinguish between technical terms (e.g., "neoplasm") and simpler ones (e.g., "tumor").

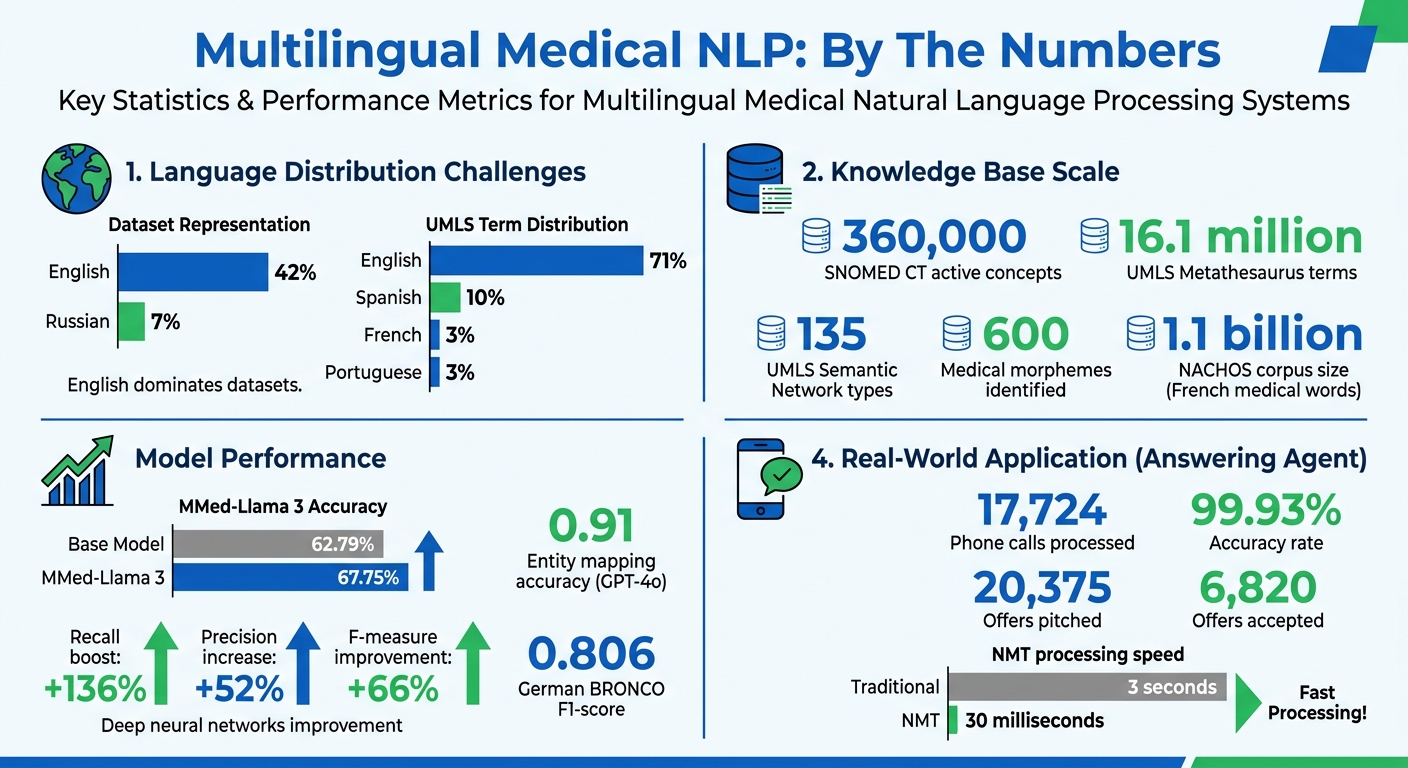

- Multilingual Challenges: English dominates datasets (42%), leaving other languages like Russian (7%) underrepresented. False cognates (e.g., Spanish intoxicado vs. "intoxicated") can cause serious errors.

- Data Gaps: Non-English clinical datasets are limited, making high-precision training costly. Medical terminology evolves rapidly, with databases like SNOMED CT needing constant updates.

Key Solutions:

- Specialized Tokenization: Morpheme-enriched tokenizers improve accuracy by respecting medical word roots (e.g., "céphal-" for "head").

- Multilingual Models: Fine-tuned models like MMed-Llama 3 outperform general-purpose ones in medical tasks.

- Knowledge Bases: Tools like UMLS and SNOMED CT standardize terms across languages using unique concept IDs.

- Translation Pipelines: Round-trip translation leverages rich English resources to process non-English texts accurately.

Results: Systems like Answering Agent achieve 99.93% accuracy in multilingual medical phone interactions, ensuring patient care transcends language barriers.

Multilingual Medical NLP: Key Statistics and Performance Metrics

Introduction to Healthcare NLP/LLMs

sbb-itb-abfc69c

Core NLP Techniques for Medical Terminology

Processing medical terminology requires specialized methods to handle its complexity, particularly when dealing with multilingual data. Let’s dive into some of the key techniques used to tackle this challenge.

Tokenization and Lemmatization for Medical Terms

Tokenization, or breaking text into smaller units, becomes tricky with medical terms. Common methods like Byte-Pair Encoding (BPE) and SentencePiece often split words based on frequency patterns, which can lead to awkward and inconsistent segments. For instance, a term like "cephalalgia" might be broken into parts that don’t align with its linguistic roots.

"Despite the agglutinative nature of biomedical terminology, existing language models do not explicitly incorporate this knowledge, leading to inconsistent tokenization strategies for common terms." - Yanis Labrak et al.

To address this, morpheme-enriched tokenization incorporates linguistic rules to ensure splits happen at meaningful points. Medical terms, often derived from Greek and Latin roots (e.g., céphal- for "head" and -algie for "pain"), benefit from predefined morphemes. Researchers have manually identified around 600 commonly used medical morphemes to guide tokenizers. For example, the NACHOS corpus, which includes 1.1 billion French medical words, shows how domain-specific training improves tokenization accuracy compared to general-purpose approaches.

When it comes to normalization (similar to lemmatization), tools like xMEN use cross-lingual candidate generation to map different forms of a term to standardized concept IDs in databases like the Unified Medical Language System (UMLS). By leveraging English aliases, xMEN can normalize medical entities in lower-resource languages, effectively bridging linguistic gaps.

From tokenization to normalization, these techniques set the stage for multilingual advancements.

Multilingual Models for Medical Texts

General-purpose multilingual models often falter with specialized medical terminology unless they undergo domain-specific training. The Multilingual Medical Corpus (MMedC), containing over 25.5 billion medical tokens across six languages, is a key resource for such adaptations.

One effective strategy is cross-lingual transfer (CLT). Here, models are fine-tuned on medical tasks in one language (usually English) and then applied to other languages without further training. For example, the MMed-Llama 3 model, with 8 billion parameters, achieved 67.75% accuracy on the MMedBench multilingual medical benchmark, outperforming the base Llama 3 model's 62.79%. Advanced models like GPT-4 show even higher accuracy in handling medical tasks.

These models employ multiple approaches to improve performance:

- Hybrid candidate generation: Combines TF-IDF for basic string matching with SAPBERT (a multilingual biomedical model) for semantic understanding via pre-trained embeddings.

- Cross-encoder ranking: Evaluates the medical entity and its context to resolve ambiguities. This is crucial for distinguishing between terms like "Paget's disease of the bone" and "Paget's disease of the breast".

For languages with limited medical datasets, weakly supervised learning via translation offers a workaround. By machine-translating English datasets and projecting entity annotations onto the translated text, this method creates training data where none previously existed. While not perfect, it enables NLP systems to function effectively in resource-scarce languages.

These innovations are transforming how medical terminology is processed, making it more accessible across languages and contexts. This is particularly valuable for organizations requiring 24/7 global coverage for patient inquiries.

Using Medical Ontologies and Knowledge Bases

Specialized knowledge bases, combined with advanced tokenization and multilingual models, play a key role in standardizing medical terminology across different languages. These resources help NLP systems recognize that terms like "heart attack" in English, "infarto de miocardio" in Spanish, and "infarctus du myocarde" in French all describe the same medical condition.

Standardizing Medical Terminology Across Languages

Resources like the Unified Medical Language System (UMLS) and SNOMED CT take a concept-based approach to standardization. Instead of focusing on individual terms, they assign a unique Concept Unique Identifier (CUI) to each medical concept, which links its variations across multiple languages.

"The principle of concept-based translation must be applied: Translations should never be literal (i.e. should not start from the term to be translated), but should always be based on the Fully Specified Name (FSN), which describes the meaning of the term in natural language." - SNOMED International

The international edition of SNOMED CT includes around 360,000 active concepts, while the UMLS Metathesaurus contains over 16.1 million terms. However, English dominates these resources, accounting for 71% of UMLS terms, with Spanish at 10%, French at 3%, and Portuguese also at 3%. For languages with fewer resources, linking terms through English aliases has proven effective for normalization.

The UMLS Semantic Network organizes concepts into 135 semantic types. This allows NLP systems to classify terms into categories like "Disease or Syndrome", regardless of whether the input is in German, Dutch, or Italian. Such standardization significantly improves entity linking and overall performance, as shown in recent studies on integration.

Improving NLP Accuracy with Knowledge Integration

By integrating standardized terminology from knowledge bases, NLP systems enhance their ability to identify and interpret medical entities. In January 2026, Sara Mazzucato and her team at EPFL applied GPT-4o to the ShARe/CLEF eHealth corpus for multilingual named entity recognition (NER) and biomedical entity linking. They translated the English-annotated corpus into Dutch and Italian using an annotation-preserving method, achieving a 0.91 accuracy rate in mapping entities to UMLS CUIs.

"Prompt-based LLMs can effectively perform NER and BEL in languages with less annotated resources, even with limited annotated training data." - Sara Mazzucato, EPFL

Modern NLP pipelines combine traditional string matching with semantic models like SAPBERT and cross-encoder ranking to resolve ambiguities. For example, the cross-encoder evaluates both the medical term and its surrounding context, which is critical for distinguishing between conditions like "Paget's disease of the bone" and "Paget's disease of the breast".

Incorporating knowledge base synonyms, a technique called "UMLS injection", further refines model accuracy. This process aligns semantically equivalent terms that might differ syntactically due to translation or regional variations. Studies show that using deep neural networks for cross-lingual concept normalization can lead to a 136% boost in recall, a 52% increase in precision, and a 66% improvement in F-measure compared to traditional string-matching methods.

These advancements enhance tools like Answering Agent, which supports high-accuracy, multilingual phone interactions for medical practices.

Translation and Cross-Lingual NLP Pipelines

When working with multilingual medical texts, round-trip translation offers a structured way to map medical entities across languages. This method translates non-English clinical text into English, processes it using specialized medical NLP tools, and then translates the identified entities back into the original language. The advantage? English has a much richer set of medical terminology and annotated resources compared to other languages, making it a powerful intermediary for processing clinical data. Let’s dive into how machine translation and span alignment refine this process for multilingual medical term handling.

Machine Translation for Medical Texts

Amazon Web Services (AWS) provides an example of how machine translation can streamline medical text processing. In their pipeline, clinical notes stored in Amazon S3 trigger AWS Lambda, which integrates Amazon Translate and Amazon Comprehend Medical. The workflow translates clinical text into English, extracts medical entities like medications, dosages, and diagnoses, and then translates these entities back into the original language. Finally, the processed data is stored in Amazon Athena and visualized using Amazon QuickSight. Automatic language detection ensures the system can handle datasets in multiple languages seamlessly.

Speed is a critical factor in clinical settings. Neural Machine Translation (NMT) offers a significant advantage, identifying semantic concepts in just 30 milliseconds, compared to the 3-second lag of traditional methods like regular expressions. However, accuracy hinges on domain-specific fine-tuning. For example, after being fine-tuned with medical bilingual corpora, smaller models like Marian (7.6 million parameters) have been shown to outperform much larger models such as NLLB (1.3 billion+ parameters).

"Extra-large MMPLM does not necessarily win over the small-sized MPLM on clinical domain MT via fine-tuning." - Lifeng Han et al.

But translation alone isn’t enough - accurate mapping back to the original text is essential.

Cross-Lingual Entity Detection and Validation

Once translation is complete, the next step is ensuring that the identified medical terms align correctly with their original positions in the source text. This is where precise span alignment comes into play. By tracking where each medical term appears in both the translated and original versions, cross-linguistic span alignment ensures that entities are accurately re-anchored. For instance, using the German BRONCO dataset, this method achieved an F1-score of 0.806 for detecting "medication" entities.

Ambiguous terms add another layer of complexity. To resolve these ambiguities, context-aware cross-encoders analyze both the medical term and its surrounding text.

For languages with limited resources, weakly supervised training steps in. This approach projects English annotations onto machine-translated datasets, creating training data for the target language. A practical example is the modular xMEN toolkit, which leverages English aliases from the UMLS database to generate candidates for medical entities, even when labeled data for the target language is unavailable.

Answering Agent's Multilingual Medical NLP Capabilities

High-Accuracy NLP for Phone Interactions

Answering Agent processes over 17,724 phone calls with an impressive 99.93% accuracy, thanks to its advanced multilingual NLP capabilities. These results stem from the use of specialized architectures like Medical mT5, which is tailored for healthcare applications and includes models with up to 3 billion parameters.

The system incorporates standardized vocabularies such as SNOMED and trains on a wide range of medical data sources, including PubMed abstracts, ClinicalTrials.gov, drug instructions, and medical theses. This allows it to recognize both technical terms like "hypertension" and everyday phrases like "high blood pressure."

Additionally, the AI automatically detects the caller's language in real time, smoothly handling scenarios where callers switch between languages. It ensures critical information - such as names, symptoms, and appointment details - is preserved without losing context. These features make it a dependable tool for handling complex medical conversations.

How Medical Practices Use Answering Agent

Answering Agent's multilingual NLP and domain-specific training make it an essential tool for diverse medical practices. The platform automates the entire call process - from welcoming patients to booking appointments and sending confirmations in their preferred language - operating 24/7 to handle routine tasks. It also manages unlimited simultaneous calls, ensuring that after-hours inquiries and emergencies are addressed, eliminating the risk of missed opportunities.

The system integrates seamlessly with practice management systems and electronic health records. Even if a call is conducted in Spanish or French, the AI generates internal notes in English, ensuring a smooth workflow for staff. As Taha Tariq of Medlaunch puts it, "In today's global marketplace, language barriers are more than a minor inconvenience - they represent a revenue leak". With 20,375 offers pitched and 6,820 accepted, Answering Agent proves how accurate multilingual NLP can directly contribute to revenue growth for healthcare providers.

Conclusion: The Future of Multilingual Medical NLP

The field of multilingual medical NLP is advancing through the development of highly specialized, domain-focused models. These models, trained on resources like the MMedC dataset, are becoming increasingly adept at managing the complexities of multilingual medical terminology.

"The development of open-source, multilingual medical language models can benefit a wide, linguistically diverse audience from different regions." - Nature Communications

Recent strides in prompt-based learning techniques highlight the power of domain-specific approaches. This method has shown impressive results, achieving accurate Named Entity Recognition (NER) in languages such as Dutch, Italian, and Catalan, even when annotated data is limited. Studies now suggest that prompt-based learning can deliver near-human accuracy for clinical NER tasks across multiple languages.

From improved tokenization methods to better integration of medical knowledge, each innovation discussed is paving the way for multilingual medical NLP to become more dependable and practical. Moving away from English-only models toward truly multilingual systems opens up opportunities that go far beyond individual healthcare providers. Pharmaceutical companies, public health organizations, and biomedical researchers all stand to benefit from these advancements. As these technologies evolve, their incorporation into tools like Answering Agent is transforming patient communication by breaking down language barriers. This ensures that a diagnosis written in Spanish can be accurately interpreted by systems operating in Japanese or French, while also providing 24/7, precise support that improves patient access to care through accessible scheduling.

FAQs

How do NLP models avoid mistranslating medical false cognates?

Natural Language Processing (NLP) models tackle the challenge of mistranslating medical false cognates through several smart techniques. They use fuzzy matching to identify similar terms and reduce errors, while context-aware translation ensures that terms are interpreted correctly based on their surrounding text. Additionally, controlled terminology helps standardize medical language to avoid confusion.

To further improve accuracy, these models rely on medical ontologies - structured frameworks that define relationships between medical terms. This ensures that complex terms are consistently and correctly translated across languages, maintaining the precision needed for multilingual medical datasets.

What’s the best approach for medical NLP in low-resource languages?

Effective medical NLP for languages with limited resources often relies on a mix of clever strategies to bridge the gap. One popular method is neural machine translation (NMT) paired with annotation projection. This involves translating clinical texts from well-resourced languages and then mapping their annotations to the target language. Another approach is cross-lingual transfer (CLT), where multilingual models are fine-tuned on one language and then applied to others. By blending these techniques, it's possible to develop efficient and scalable clinical NLP tools while minimizing the need for extensive manual annotation.

How are medical terms mapped to the same concept across languages?

Cross-lingual medical entity normalization helps align medical terms across different languages. By using techniques like semantic embeddings generated by Large Language Models (LLMs), a shared multilingual embedding space is created. This allows clinical terms to be accurately translated and aligned, ensuring that medical procedures and terminology are consistently understood, no matter the language.

Related Blog Posts

See how AI handles calls for your business

Enter your business name and we'll build a personalized AI receptionist demo in under 2 minutes. Talk to it right in your browser.

No signup required · Free to try · Works for any business

Related Articles

Healthcare AI Phone Systems: Cost Breakdown

Flat-rate HIPAA-ready AI phone systems cut healthcare call costs, boost response times, and reduce missed patient leads.

AI Call Routing for Multi-Specialty Clinics

Compare top AI call routing platforms for multi-specialty clinics — accuracy, scalability, EHR integration, and cost.

Multilingual AI Telehealth: ROI for Medical Practices

Multilingual AI telehealth enhances patient care and reduces costs by overcoming language barriers, transforming healthcare practices financially and operationally.