GDPR Compliance for AI Voice Agents

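If your business uses AI voice agents and interacts with people in the EU, GDPR compliance is mandatory. This European privacy law applies to organizations anywhere in the world that offer goods or services to, or monitor the behavior of, individuals located in the EU, regardless of their citizenship. Here's what you need to know: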

- Voice Data Is Personal Data: Voice recordings count as personal data under GDPR, and voiceprints used to identify a person are special category biometric data that requires explicit consent and strict protection.

- Risks of Non-Compliance: Fines can reach up to €20 million or 4% of global annual turnover, whichever is higher. In 2026, a US company was fined €85 million for improper AI data handling.

- Core Principles: Transparency, data minimization, and accountability are key. Collect only necessary data, notify users you're recording, and secure all information.

- Practical Steps: Use encryption, limit data access, automate deletion policies, and conduct regular Data Protection Impact Assessments (DPIAs).

- Vendor Compliance: Choose AI providers with GDPR-compliant contracts, clear data handling policies, and tools to manage user data rights.

Meeting GDPR standards protects your business from legal risks and builds customer trust. Start by ensuring your AI systems prioritize privacy and security from day one.



Core GDPR Principles for AI Voice Agents

To ensure your AI voice system complies with GDPR, focus on these three key principles. These guidelines not only help you avoid penalties but also establish trust by prioritizing user privacy from the start.

Lawfulness, Fairness, and Transparency

Your AI voice agent must clearly identify itself as an automated system at the beginning of a call and explain why it is collecting data. Include a disclosure that clarifies the call is automated and outlines its purpose. Additionally, you need a valid legal basis for processing voice data. Common options include explicit consent, contractual necessity, or legitimate interest. For biometric data, explicit consent is non-negotiable.

A significant 47% of compliance issues arise from the lack of informed consent. As Technova Partners highlights:

"The practice of chatbots that 'pretend to be human' explicitly violates the principle of lawfulness, fairness and transparency."

Failing to disclose the AI's identity can lead to serious regulatory violations. Beyond transparency, it’s essential to collect only the data that is absolutely necessary.

Data Minimization and Purpose Limitation

Collect only the information you need to fulfill a specific task. For example, if your AI is scheduling appointments, it doesn’t need the caller’s birth date or Social Security number. This principle of data minimization ensures that unnecessary data isn’t collected.

Purpose limitation means that data collected for one task cannot be reused for another without obtaining fresh consent. For instance, a phone number collected for appointment reminders cannot later be used for marketing without explicit permission. Progressive disclosure can help here - start interactions anonymously and request personal details only when absolutely required.

For sensitive data like credit card numbers, use DTMF masking, allowing users to input digits through their keypad without recording the information.
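To make the idea concrete, here is a minimal sketch of DTMF masking, assuming a recorder that receives audio in segments. `CallRecorder`, `dtmf_capture`, and `mask_digits` are hypothetical names for illustration, not part of any telephony SDK; a real platform would expose its own pause and resume controls.

```python
from contextlib import contextmanager


class CallRecorder:
    """Toy recorder that stores audio segments only while recording is on."""

    def __init__(self):
        self.recording = True
        self.saved_segments = []

    def on_audio(self, segment):
        # Drop audio that arrives while recording is paused.
        if self.recording:
            self.saved_segments.append(segment)

    @contextmanager
    def dtmf_capture(self):
        """Pause recording for the duration of keypad entry."""
        self.recording = False
        try:
            yield
        finally:
            self.recording = True  # always resume, even if capture fails


def mask_digits(digits, visible=4):
    """Mask all but the last `visible` digits for logs and confirmations."""
    hidden = max(len(digits) - visible, 0)
    return "*" * hidden + digits[hidden:]


recorder = CallRecorder()
recorder.on_audio("greeting")
with recorder.dtmf_capture():
    recorder.on_audio("keypad tones")  # not stored
recorder.on_audio("confirmation")
```

The context manager guarantees recording resumes even if the payment step raises an error, so the rest of the call is still captured.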

| Data Category | Retention Period | Legal Basis |
|---|---|---|
| Anonymous Conversation Logs | 30–90 Days | Legitimate Interest |
| Support Conversations (Ticketed) | 1–3 Years | Contract Performance |
| Sensitive Data (Health/Finance) | Immediate deletion after resolution | Explicit Consent / Legal Obligation |
| Marketing Leads | Until consent withdrawal | Explicit Consent |

Finally, ensure your system is accountable and secure.

Accountability and Security

GDPR Article 30 mandates keeping a Record of Processing Activities (ROPA). This log should document what data is collected, its purpose, who can access it, and the retention schedule.
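One way to keep such a record machine-readable is a small structured entry per processing activity. The sketch below is illustrative only: the `ProcessingRecord` class and its field names are assumptions that mirror the items listed above, not an official Article 30 template.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class ProcessingRecord:
    """One ROPA entry (field names are illustrative, not an official schema)."""
    activity: str
    data_categories: Tuple[str, ...]  # what data is collected
    purpose: str                      # why it is collected
    legal_basis: str
    recipients: Tuple[str, ...]       # who can access it
    retention: str                    # retention schedule


ropa = [
    ProcessingRecord(
        activity="Appointment scheduling calls",
        data_categories=("name", "phone number", "call transcript"),
        purpose="Book and confirm appointments",
        legal_basis="Contract performance",
        recipients=("support team", "telephony vendor"),
        retention="1-3 years",
    ),
]


def export_ropa(records):
    """Serialize the register to JSON so it can be handed to an auditor."""
    return json.dumps([asdict(record) for record in records], indent=2)
```

Keeping the register in version control alongside the agent configuration makes it easier to spot when a new data field appears without a matching entry.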

To secure data, use encryption standards like TLS 1.3 for data in transit and AES-256 for data at rest. Implement Role-Based Access Control (RBAC) and Multi-Factor Authentication (MFA) to limit access. For multi-tenant systems, Row-Level Security (RLS) ensures that one client’s data remains inaccessible to others.

Set up automated retention policies to delete personal data after predefined periods - typically 30–90 days for logs and 1–3 years for support tickets. Automation reduces the risks of human error in data deletion.
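A retention job along these lines could run on a daily schedule. This is a minimal sketch under stated assumptions: records are plain dictionaries with `category` and `created_at` keys, and the limits use the upper end of the ranges mentioned above. Both the record shape and the category names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumed limits: the upper bound of the 30 to 90 day and 1 to 3 year ranges.
RETENTION = {
    "anonymous_log": timedelta(days=90),
    "support_ticket": timedelta(days=3 * 365),
}


def is_expired(record, now):
    """Return True when a record has outlived its category's retention limit."""
    limit = RETENTION.get(record["category"])
    if limit is None:
        return False  # unknown category: keep it and review manually
    return now - record["created_at"] > limit


def purge(records, now=None):
    """Return (records to keep, number of records deleted)."""
    now = now or datetime.now(timezone.utc)
    kept = [record for record in records if not is_expired(record, now)]
    return kept, len(records) - len(kept)
```

Returning the deleted count gives you a figure to write into your audit log each time the job runs.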

Before deploying a voice agent that processes biometric data or monitors users extensively, conduct a Data Protection Impact Assessment (DPIA). This assessment identifies potential risks and outlines strategies to address them. As AgentiveAIQ notes:

"AI can drive ROI only if it operates within legal and ethical guardrails."

How to Achieve GDPR Compliance

To meet GDPR requirements, focus on gaining explicit consent, embedding privacy protections into your systems, and conducting thorough Data Protection Impact Assessments (DPIAs). In 2024, 73% of AI agent implementations in European companies revealed vulnerabilities in GDPR compliance. These measures help translate complex regulations into actionable steps for every interaction.

Getting User Consent

Where consent is your legal basis, your AI voice agent must obtain it before processing personal data. Consent should involve a clear, affirmative action, such as saying "I accept" or clicking a consent button. For biometric data like voiceprints - classified as "special category data" under GDPR - explicit opt-in consent is non-negotiable.

To ensure transparency, automate a disclaimer at the start of every call: "This is an AI assistant. Your conversation will be recorded and processed according to our privacy policy." Transparency is key, especially since 62% of European consumers abandon chatbot interactions if they feel data use isn't clear.

Make withdrawing consent as simple as granting it. Include options like a "Revoke Data Consent" button or allow users to cancel via a verbal command during calls. Record every consent action with details like the date, time, and purpose. For example, if you collect a phone number for appointment reminders, you’ll need separate consent to use it for marketing. This approach helps maintain trust and aligns with GDPR’s transparency principles.
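The consent record described above can be sketched as an append-only ledger in which the latest event for each caller and purpose decides the outcome. `ConsentLedger` and its method names are hypothetical; a production system would persist events in a database rather than in memory.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class ConsentEvent:
    caller_id: str
    purpose: str        # consent is tracked per purpose, never globally
    granted: bool       # False records a withdrawal
    timestamp: datetime


class ConsentLedger:
    """Append-only log of consent grants and withdrawals."""

    def __init__(self):
        self._events: List[ConsentEvent] = []

    def record(self, caller_id, purpose, granted, timestamp=None):
        event = ConsentEvent(
            caller_id, purpose, granted, timestamp or datetime.now(timezone.utc)
        )
        self._events.append(event)
        return event

    def has_consent(self, caller_id, purpose):
        # The most recent event for this caller and purpose wins.
        for event in reversed(self._events):
            if event.caller_id == caller_id and event.purpose == purpose:
                return event.granted
        return False  # no record means no consent


ledger = ConsentLedger()
ledger.record("caller-1", "appointment_reminders", granted=True)
```

Because nothing is overwritten, the ledger doubles as evidence of when consent was given and when it was withdrawn.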

Building Privacy into Your System

Incorporate privacy into your system's design from the ground up. Use progressive disclosure, asking for user details only when necessary. For instance, an appointment scheduler doesn’t need a Social Security number or birth date to function.

Secure sensitive data with TLS 1.3 for data in transit and AES-256 for data at rest. Implement Role-Based Access Control (RBAC) combined with Multi-Factor Authentication (MFA) to limit access to voice recordings. For payment processing, use DTMF masking, which lets users input credit card details via their keypad, bypassing voice recordings entirely.

Set up automated data retention policies within your system. For example, delete anonymous conversation logs after 30–90 days and support tickets after 1–3 years. If you're a SaaS provider with multiple clients, apply Row-Level Security (RLS) to keep each client’s data securely separated.
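As a rough illustration of how the access rules in this section combine, the sketch below checks MFA, tenant, and role before granting access. The role names and permissions are assumptions, and the tenant check is an application-level stand-in: true Row-Level Security is enforced by the database itself.

```python
from dataclasses import dataclass

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "support_agent": {"read_transcript"},
    "compliance_officer": {"read_transcript", "play_recording", "delete_recording"},
}


@dataclass
class User:
    name: str
    role: str
    tenant_id: str
    mfa_verified: bool


def can_access(user, permission, resource_tenant_id):
    """Allow access only when MFA, tenant, and role checks all pass."""
    if not user.mfa_verified:
        return False  # no MFA, no access to voice data
    if user.tenant_id != resource_tenant_id:
        return False  # never cross tenant boundaries
    return permission in ROLE_PERMISSIONS.get(user.role, set())
```

Unknown roles fall through to an empty permission set, so the default is to deny.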

Running Data Protection Impact Assessments

DPIAs are essential for identifying and managing risks tied to data processing. According to GDPR Article 35, a DPIA is required when processing activities could pose high risks to individuals. AI voice agents often fall into this category, especially when handling sensitive data or making critical decisions like credit scoring.

"DPIAs assist in detecting and mitigating risks affiliated with data processing tasks. Given the intricacy of AI systems and their potential effects on individuals' privacy, it's crucial for AI systems to undergo this analysis." – Exabeam

A comprehensive DPIA should cover four main areas: a description of your data processing activities (what data is collected and why), an assessment of whether the collection is necessary, the risks posed to user privacy, and the measures you’ve implemented to reduce those risks (e.g., encryption, access controls, and retention policies).

Conduct the DPIA during the system design phase, as part of your checklist for implementing AI phone answering. Update it annually, and perform quarterly audits to catch changes in your data flows. This documentation is crucial for demonstrating compliance during regulatory checks.
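If you track DPIAs in code or configuration, a small structure like the one below can flag gaps and overdue reviews. It is a sketch only: the `DPIA` class, its field names, and the 365-day interval are assumptions based on the four areas and the annual update described above.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List


@dataclass
class DPIA:
    processing_description: str
    necessity_assessment: str
    risks: List[str] = field(default_factory=list)
    mitigations: List[str] = field(default_factory=list)
    last_reviewed: date = field(default_factory=date.today)


def missing_sections(dpia):
    """List the DPIA areas that are still empty."""
    sections = {
        "processing_description": dpia.processing_description,
        "necessity_assessment": dpia.necessity_assessment,
        "risks": dpia.risks,
        "mitigations": dpia.mitigations,
    }
    return [name for name, value in sections.items() if not value]


def review_overdue(dpia, today, interval=timedelta(days=365)):
    """True when the last review is older than the assumed annual interval."""
    return today - dpia.last_reviewed > interval
```

A check like this fits naturally into a release pipeline, blocking deployment of a voice agent whose assessment is incomplete.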

Selecting GDPR-Compliant AI Voice Agent Providers

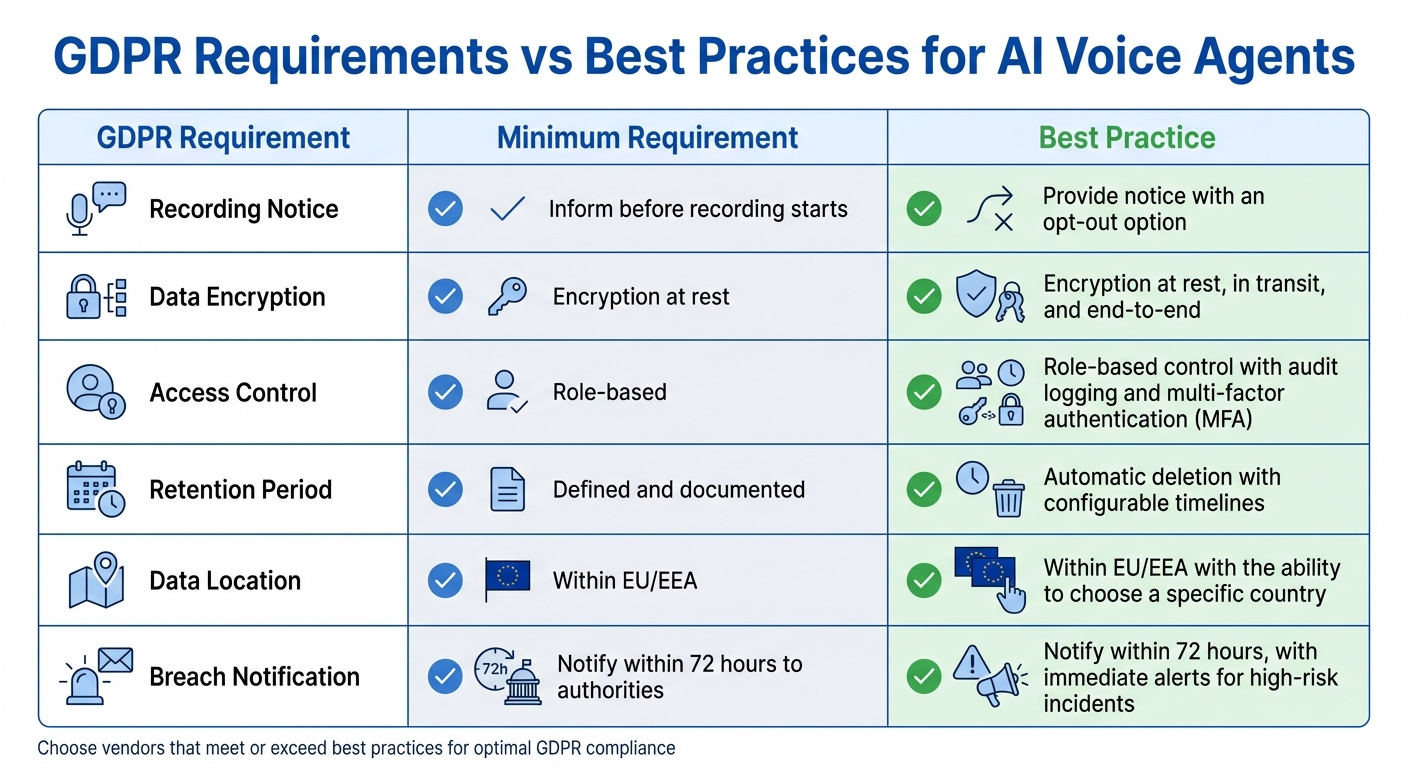

GDPR Compliance Requirements vs Best Practices for AI Voice Agents

When choosing an AI voice agent provider, it's essential to ensure they offer a GDPR-compliant Data Processing Agreement. This formal contract, required under GDPR Article 28, outlines how data is handled, the security measures in place, and breach notification protocols. If a vendor cannot provide this agreement, it’s a major red flag.

Vendor Evaluation Checklist

Start by confirming data residency. The vendor should store and process data within the EU/EEA to avoid complications with cross-border data transfers. Don’t just rely on marketing claims - request the vendor's SOC 2 Type II report to verify their security controls meet industry standards.

Transparency is key. Ensure the vendor discloses all third-party sub-processors, whether for cloud infrastructure, telephony, or language model APIs. Each sub-processor should adhere to strict data protection guidelines. Additionally, inquire about their model training policies. GDPR-conscious vendors should allow you to opt out of data use for training or guarantee anonymization beforehand.

Here’s a quick comparison of GDPR requirements and best practices:

| GDPR Requirement | Minimum Requirement | Best Practice |
|---|---|---|
| Recording Notice | Inform before recording starts | Provide notice with an opt-out option |
| Data Encryption | Encryption at rest | Encryption at rest, in transit, and end-to-end |
| Access Control | Role-based | Role-based control with audit logging and multi-factor authentication (MFA) |
| Retention Period | Defined and documented | Automatic deletion with configurable timelines |
| Data Location | Within EU/EEA | Within EU/EEA with the ability to choose a specific country |
| Breach Notification | Notify within 72 hours to authorities | Notify within 72 hours, with immediate alerts for high-risk incidents |

Additionally, ensure the system supports data subject rights. This includes the ability to identify, export, and delete all data linked to an individual within GDPR's one-month response deadline (Article 12(3)), which can be extended by two further months for complex or numerous requests.
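When testing a vendor's tooling, it helps to know what "identify, export, and delete" looks like in practice. The sketch below assumes data lives in named stores of dictionaries keyed by a hypothetical `caller_id`, and it uses a simplified calendar rule for the response deadline. Confirm the exact deadline calculation with your legal counsel.

```python
import calendar
from datetime import date


def response_deadline(received):
    """Same day of the following month, clamped to that month's last day."""
    year = received.year + (received.month // 12)
    month = received.month % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(received.day, last_day))


def export_subject_data(stores, caller_id):
    """Collect every record linked to one individual, grouped by store."""
    return {
        name: [row for row in rows if row["caller_id"] == caller_id]
        for name, rows in stores.items()
    }


def erase_subject_data(stores, caller_id):
    """Delete the individual's records in place and return how many were removed."""
    removed = 0
    for name, rows in stores.items():
        kept = [row for row in rows if row["caller_id"] != caller_id]
        removed += len(rows) - len(kept)
        stores[name] = kept
    return removed
```

Running the export first and the erasure second leaves you with a copy of what was deleted, which is useful if the individual asked for both.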

Answering Agent: A GDPR-Compliant Solution

One provider that checks all the boxes is Answering Agent. This platform specializes in AI phone answering for service businesses, including home services, medical practices, law firms, staffing agencies, and car washes. Its privacy-first design ensures secure data handling and transparency.

Answering Agent has processed over 17,724 scored calls with an impressive 99.93% accuracy rate. Operating 24/7 with a natural voice, it handles unlimited simultaneous calls, books appointments, captures leads, and ensures no calls are missed - even during peak times.

The platform uses industry-standard encryption, including AES-256 for data at rest and TLS 1.3 for data in transit. It also offers configurable recording notices that inform callers at the start of every interaction. For GDPR compliance, Answering Agent supports automated retention policies and provides tools to manage data subject rights, such as access, correction, and deletion requests.

On top of its robust privacy features, Answering Agent offers transparent pricing with no unpredictable per-minute charges, making it a cost-effective alternative to human receptionists.

Maintaining GDPR Compliance Over Time

Staying compliant with GDPR is not a one-and-done task - it requires ongoing effort and vigilance. By early 2026, 84% of organizations admitted they couldn’t pass an AI agent compliance audit. This is largely because AI voice agents are constantly evolving through updates, new features, and model upgrades - each of which can introduce new compliance risks.

Regular Compliance Monitoring and Audits

To keep up, organizations should perform quarterly reviews to catch configuration drift or unauthorized access. These audits are crucial for verifying that consent mechanisms work as intended, encryption protocols are active, and automated deletion processes are functioning properly.

Here’s a breakdown of effective monitoring practices:

| Monitoring Activity | Frequency | Key Objective |
|---|---|---|
| Vulnerability Scans | Quarterly | Identify and address weaknesses in infrastructure |
| Red Teaming | Quarterly | Test for vulnerabilities like prompt injection |
| DPIA Review | Annually | Reassess risks tied to biometric data processing |
| Tabletop Exercises | Semi-Annually | Simulate breaches and test incident response plans |
| Knowledge Base Audit | Periodic | Remove PII from indexed content |

One standout practice is quarterly red teaming, where specialized teams test for vulnerabilities like data leakage or prompt injection. This is especially important because 96% of GDPR fines are linked to poor data governance, not malicious actions.

Before rolling out any new AI agent feature, conduct a "mini privacy review" to avoid accumulating privacy debt. Similarly, semi-annual tabletop exercises can help refine your response to potential data breaches or unusual agent behavior.

Another critical task is auditing your AI agent’s knowledge base for exposed PII. Even one mistakenly indexed customer record could trigger a reportable breach.
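A first-pass knowledge base audit can be as simple as a pattern scan. The sketch below uses two deliberately rough regular expressions for emails and phone numbers, both of which are assumptions for illustration. Treat the results as leads for human review, since simple patterns will miss names, addresses, and other PII.

```python
import re

# Rough illustrative patterns; real audits need broader PII detection.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def scan_for_pii(documents):
    """Return (document id, PII type, matched text) for every hit."""
    findings = []
    for doc_id, text in documents.items():
        for label, pattern in PII_PATTERNS.items():
            for match in pattern.finditer(text):
                findings.append((doc_id, label, match.group()))
    return findings


knowledge_base = {
    "faq": "We are open 9 to 5 on weekdays.",
    "note": "Call Jane at +49 30 1234567 or jane@example.com",
}
findings = scan_for_pii(knowledge_base)
```

In this example the scan flags the `note` document twice and leaves the `faq` document alone.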

These proactive measures not only protect your current operations but also help you adapt to future regulatory changes.

Preparing for Regulatory Changes

Adapting to new regulations is just as important as maintaining existing compliance. For example, most of the EU AI Act’s transparency and high-risk obligations are scheduled to apply from August 2, 2026. While most customer service AI agents fall into the "limited risk" category - requiring only that users are informed they’re interacting with AI - agents involved in hiring, credit, or legal decisions are classified as "high risk" and require detailed documentation.

To stay ahead, keep a systematic inventory of all AI agents in your organization. This inventory should include details like each agent’s capabilities, its risk classification under the EU AI Act, and the designated human owner. Such a registry will make it easier to identify and update systems as regulations evolve.
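Such an inventory does not need special tooling to get started. Here is a minimal sketch, assuming a simplified two-level risk rule based on the hiring, credit, and legal examples above. The `AgentRecord` fields and the `classify` helper are hypothetical, and a real classification under the EU AI Act needs legal review.

```python
from dataclasses import dataclass
from typing import List

# Simplified rule taken from the examples above, not a legal test.
HIGH_RISK_DOMAINS = {"hiring", "credit", "legal"}


@dataclass
class AgentRecord:
    name: str
    capabilities: List[str]
    risk_class: str  # "limited" or "high"
    owner: str       # the designated human owner


def classify(capabilities):
    """Flag an agent as high risk if it touches any high-risk domain."""
    return "high" if HIGH_RISK_DOMAINS & set(capabilities) else "limited"


def register(inventory, name, capabilities, owner):
    """Add an agent to the inventory with its derived risk class."""
    record = AgentRecord(name, list(capabilities), classify(capabilities), owner)
    inventory.append(record)
    return record


inventory = []
register(inventory, "booking-bot", ["scheduling", "faq"], owner="ops lead")
register(inventory, "loan-screener", ["credit", "faq"], owner="risk lead")
```

Filtering the list by `risk_class` then tells you which agents need the heavier documentation.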

Stay informed by subscribing to updates from your local Data Protection Authority and relevant industry groups. In the U.S., state-level privacy laws are increasingly adopting a "strictest standard" approach. For instance, Illinois has stringent requirements for managing voice biometric data. Under GDPR, voiceprints are often considered "special category" biometric data, which means they require explicit consent and strong security measures.

To prepare for these challenges, design your systems with flexibility in mind. One way to do this is by using hash-chained audit trails, where each log entry references the previous one, making records tamper-evident for regulators. As Salesix AI put it:

"Compliance isn't a feature you add later - it's architectural." – Salesix AI

Finally, establish human-in-the-loop protocols for decisions with high stakes or emotional complexity. With stricter AI regulations on the horizon, demonstrating human oversight is becoming more critical - especially as GDPR fines for mishandling voice data jumped 40% year-over-year in 2025.
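The hash-chained audit trail mentioned above can be sketched in a few lines with Python's standard `hashlib` and `json` modules. The entry layout and helper names here are assumptions for illustration. Each entry stores the hash of the previous one, so changing any earlier record breaks verification.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, event):
    """Append an event that references the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"event": event, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify_chain(log):
    """Recompute every hash and link; any edit to a past entry fails."""
    prev_hash = GENESIS
    for entry in log:
        body = {"event": entry["event"], "prev_hash": entry["prev_hash"]}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"action": "consent_granted", "caller": "caller-1"})
append_entry(audit_log, {"action": "recording_deleted", "caller": "caller-1"})
```

The chain makes tampering evident but does not prevent it, so the log itself still needs access controls and backups.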

Conclusion

Meeting GDPR requirements for AI voice agents isn't optional - it protects both your users and your business. With 72% of GDPR violations tied to unlawful data processing and penalties reaching up to €20 million or 4% of global revenue, the stakes are simply too high to overlook.

Here’s what you need to do: ensure a lawful basis for processing voice data, clearly notify users that they’re interacting with AI, limit data collection to what’s absolutely necessary, and set up automated deletion policies (usually within 30–90 days). You’ll also need a Data Processing Agreement with your AI vendor, tools to support data subject rights like access and erasure, and human oversight for decisions with major consequences.

Taking these steps not only keeps you compliant but also strengthens customer trust. Transparency and security are more than just regulatory requirements - they’re competitive advantages. In fact, 72% of EU consumers say they’d stop using a service over privacy concerns. As Technova Partners puts it:

"Security and privacy are not regulatory overhead, they are competitive advantage that builds trust"

With new regulations like the EU AI Act on the horizon, staying compliant means keeping an eye on the evolving legal landscape. Regular audits, automated compliance tools, and a privacy-first approach will help ensure your systems meet both current and future standards.

If you’re looking for a GDPR-compliant solution, Answering Agent (https://answeringagent.com) offers a built-in compliance framework. For $199 per month, you get unlimited calls, 99.93% accuracy across over 17,724 scored calls, and 24/7 availability. Whether you’re in home services, healthcare, or legal fields, choosing a provider that prioritizes compliance as a core feature - not an afterthought - is essential.

FAQs

Do AI voice calls always need explicit consent under GDPR?

No. Explicit consent is one of several legal bases under GDPR, and a call can also rest on contractual necessity or legitimate interest. Whatever the basis, you must be transparent and clearly tell the caller they are speaking with an AI. Explicit consent does become mandatory when you process special category data, such as voiceprints used to identify a caller.

How can I handle payments or sensitive info without recording it?

Use DTMF masking: have callers enter card numbers or other sensitive digits on their keypad so the information is not captured in the voice recording. Beyond that, your AI voice agent should clearly identify itself, obtain explicit consent before collecting sensitive data, and gather only what the specific task requires. It's also critical to let users access, correct, or delete their data. This aligns with GDPR principles, minimizes unnecessary data storage, and helps avoid potential legal complications.

What should I ask for in a vendor’s GDPR Data Processing Agreement (DPA)?

When you're examining a vendor’s GDPR Data Processing Agreement (DPA), make sure it spells out the critical compliance details, such as:

- Purpose and scope of data processing: What data is being processed and why.

- Security measures and sub-processor responsibilities: How data is protected and the obligations of any third parties involved.

- Data retention and deletion policies: How long data is kept and the procedures for securely deleting it. Also, check for details on cross-border data transfers.

- Support for GDPR obligations: This includes processes for breach notifications and allowing audits.

These points are essential for ensuring the vendor handles personal data responsibly and adheres to GDPR standards.
